by Soren Ryan-Jensen & Daniel Covington

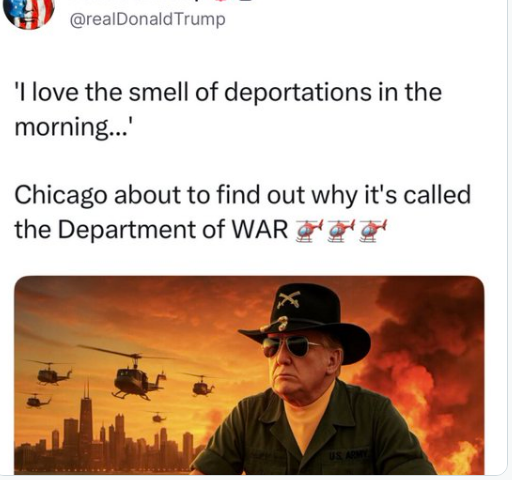

Generative AI has seen increasing use in the creation of video content, and as these programs become more sophisticated, many people now find it hard to distinguish an AI-generated video from real footage. AI videos have been used to run scams, spread misinformation, and fill social media with generated videos depicting either real or fictional events. A recent and controversial example is the White House’s use of these generative technologies to create a video depicting Donald Trump piloting a fighter jet and dumping sewage on “No Kings” protestors.

The Trump White House’s use of generative AI has come under scrutiny, with many raising concerns over its use by government officials to spread demeaning or outright incorrect information. One such example is the AI-edited photo of Nekima Levy Armstrong, a woman who was arrested during a protest against ICE, posted on the White House’s official X account; the edit darkened her skin and made her appear far more distressed.

But the use of AI in video generation is not limited to the government. Private social media accounts have posted generated videos depicting violence between ICE agents and protestors, often with one group violently assaulting the other. This manipulation of reality, often termed “slopaganda”, is especially concerning as independent actors can create seemingly real evidence of any narrative they choose.

It is not hard to imagine the end consequences of accessible generative AI, especially when we know that countries such as Russia have run “troll farms” on social media to manipulate the outcomes of elections. These “farms” have generated vast amounts of social media posts aimed at polarizing citizens against each other in an effort to sow unrest. Large actors, such as Russia, will now be able to directly launch disinformation campaigns using generated footage that is often difficult to distinguish from reality. But on a wider scale, generative AI allows for a democratization of misinformation campaigns. Lone individuals can (with limited technological ability) create as many bot social media accounts as they wish, all posting generated footage that furthers their own narrative. This is uncharted territory: never before has so great a power to manipulate reality been so easy to access.

Above all, the creation of so much “slopaganda” devalues footage itself. Videos are often the most accessible method of documenting reality, and when generative AIs can produce lifelike footage, the ability of ordinary citizens to document what is happening around them comes into question. The AI videos of ICE officers and protestors clashing may fuel ideological narratives, but far worse, they make it more difficult to prove when either group acts inappropriately. In this light, the White House’s use of AI to generate official posts normalizes its use in widespread disinformation.

Sources:

White House using AI, fabricating reality or “memes”: https://www.theguardian.com/us-news/2026/jan/29/the-slopaganda-era-10-ai-images-posted-by-the-white-house-and-what-they-teach-us

Use of AI erodes public trust:

“An influx of AI-generated videos related to Immigration and Customs Enforcement action, protests and interactions with citizens has already been proliferating on social media. After Renee Good was shot by an ICE officer while she was in her car, several AI-generated videos began circulating of women driving away from ICE officers who told them to stop. There are also many fabricated videos circulating of immigration raids and of people confronting ICE officers, often yelling at them or throwing food in their faces.”

Specifically focusing on the use of AI videos in relation to ICE:

https://www.wired.com/story/anti-ice-videos-are-getting-the-ai-fanfic-treatment-online

“Tucker says there is concern that the increasing flood of anti-ICE AI content could potentially backfire by contributing to “a general perception that you just can’t trust videos when you see them anymore,” making it “harder to convince people of the fact that things which are actually real are, in fact, real.” This played out on Wednesday, with new footage of Pretti confronting ICE officers on January 13, more than a week before he was killed, posted by media outlet The News Movement; on Instagram and YouTube, many commenters accused the video of being AI generated. (Pretti’s family has confirmed to the New York Times that it was him.)”

Use of AI in writing regulations:

“The answer from the plan’s boosters is simple: speed. Writing and revising complex federal regulations can take months, sometimes years. But, with DOT’s version of Google Gemini, employees could generate a proposed rule in a matter of minutes or even seconds, two DOT staffers who attended the December demonstration remembered the presenter saying. In any case, most of what goes into the preambles of DOT regulatory documents is just “word salad,” one staffer recalled the presenter saying. Google Gemini can do word salad.”